I want to take the opportunity to fire up my blog with a short and focused introduction to Azure Functions for all of us cloud nerds out there. This is something I really love to use in my day-to-day work, or when I just want to have a little fun exploring new possibilities. The title feels appropriate around Valentine's Day.

The journey towards Node.js, unified APIs and JavaScript-supported frameworks in Azure and Office 365 services is a true blessing for me, since I have a background in web development and love to read up on new front-end technology. It gives us developers so many alternatives and choices. Furthermore, the era of serverless applications (in a Microsoft context, Azure Functions) gives us the possibility to focus more on functionality and on delivering value instead of on infrastructure-related issues and concerns. Azure Functions allows us to write smaller applications with a clear and defined scope and purpose and, best of all, in the language of your choice.

I have used Azure Functions for a lot of different purposes, and the language I choose depends on what I want to achieve. When working with Office 365 services I tend to use a mix of Node.js and PowerShell functions to overcome the limitations each of them has. I strive to do as much as possible with the Graph APIs available to us.

Here is a list of the supported languages right now:

| Language | Runtime 1.x | Runtime 2.x |
|---|---|---|
| C# | GA (.NET Framework 4.7) | GA (.NET Core 2) |
| JavaScript | GA (Node 6) | GA (Node 8 & 10) |
| F# | GA (.NET Framework 4.7) | GA (.NET Core 2) |
| Java | N/A | Preview (Java 8) |
| Python | Experimental | Preview (Python 3.6) |
| TypeScript | Experimental | Supported through transpiling to JavaScript |
| PHP | Experimental | N/A |
| Batch (.cmd, .bat) | Experimental | N/A |
| Bash | Experimental | N/A |
| PowerShell | Experimental | N/A |

Table: Supported languages in runtime versions 1.x and 2.x.

As you can see, PowerShell is listed as Experimental. The need for PowerShell functions is obvious, I think, but it remains to be seen whether we get any more definite news about this. If you know anything, please ping me :-)

So what about triggers?

The beauty of Azure Functions is that you can trigger the execution of your function in a bunch of different ways. I have mostly done implementations with timer triggers and HTTP triggers, which has been more than enough for my current needs. I like to combine HTTP triggers with other services such as Logic Apps or Flow to create and implement workflows or processes.

HTTPTrigger - Trigger the execution of your code by using an HTTP request. For an example, see Create your first function.
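To make the HTTP trigger a bit more concrete, here is a minimal sketch of an HTTP-triggered function in Node.js. It assumes the 2.x runtime and its JavaScript programming model, where `index.js` exports a function that receives `context` and `req`. The `name` parameter and the response texts are made up for illustration, and the binding configuration in `function.json` is left out.

```javascript
// index.js: a minimal HTTP-triggered function (sketch, assuming runtime 2.x).
// The "name" parameter and the response texts are illustrative assumptions.
async function httpTrigger(context, req) {
    // Read the name from the query string or, failing that, from the JSON body.
    const name = (req.query && req.query.name) || (req.body && req.body.name);

    if (!name) {
        context.res = {
            status: 400,
            body: "Please pass a name in the query string or in the request body."
        };
        return;
    }

    context.log(`HTTP trigger processed a request for ${name}`);
    context.res = { status: 200, body: `Hello, ${name}!` };
}

module.exports = httpTrigger;
```

Because the function only talks to the outside world through `context` and `req`, it can be called from a Logic App, from Flow or from a plain test script in the same way.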

TimerTrigger - Execute cleanup or other batch tasks on a predefined schedule. For an example, see Create a function triggered by a timer.
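A timer-triggered function looks very similar. The sketch below again assumes the 2.x JavaScript model. The schedule is not set in code but in `function.json`, as a six-field NCRONTAB expression. The binding name `myTimer` and the `cleanUp` helper are hypothetical placeholders, and the past-due flag is an assumption about what the runtime passes in.

```javascript
// index.js: a minimal timer-triggered function (sketch, assuming runtime 2.x).
// The schedule lives in function.json, for example:
//   { "type": "timerTrigger", "direction": "in",
//     "name": "myTimer", "schedule": "0 */5 * * * *" }
// "0 */5 * * * *" is a six-field NCRONTAB expression: every five minutes.

// Hypothetical placeholder for the actual batch work.
async function cleanUp(context) {
    context.log("Cleaning up ...");
    return 0; // number of items removed in this illustrative stub
}

async function timerTrigger(context, myTimer) {
    // Assumption: the timer object carries a past-due flag. Both casings are
    // checked because the exact name may depend on the runtime version.
    if (myTimer && (myTimer.isPastDue || myTimer.IsPastDue)) {
        context.log("Timer function is running late.");
    }

    const removed = await cleanUp(context);
    context.log(`Timer run finished at ${new Date().toISOString()}, removed ${removed} items.`);
}

module.exports = timerTrigger;
```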

If you want to read more about Azure Functions, take a minute to develop your understanding of this valuable Azure service with the introduction listed under Resources below.

What about the Cost?

Best of all, thanks to the serverless design, Azure Functions lets you as a developer quickly come up with a solution, test your logic in an easy manner and be up and running in no time. Azure Functions can also be a great upside on the economic side of things, since the so-called Consumption plan lets you pay only for the function runs that are actually executed.

Consumption plan - When your function runs, Azure provides all of the necessary computational resources. You don't have to worry about resource management, and you only pay for the time that your code runs.

App Service plan - Run your functions just like your web apps. When you are already using App Service for your other applications, you can run your functions on the same plan at no additional cost.

Think about the following:

Are you going to implement a time-consuming or long-running operation? Then you are probably doing yourself a favor by investigating the difference in cost between these two options. With the consumption plan you want a short execution time for each run.
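A back-of-the-envelope calculation shows why execution time matters so much on the consumption plan. The sketch below assumes a billing model with a charge per execution plus a charge for resource consumption in GB-seconds (memory multiplied by duration). The rates and free grants in `ASSUMED_RATES` are illustrative placeholders, and the function name `estimateMonthlyCost` is made up for this example, so take the real numbers from the Azure Functions pricing page. Rounding rules for memory and minimum execution time are ignored.

```javascript
// Rough monthly cost estimate for a consumption plan (sketch).
// ASSUMPTION: all rates and free grants below are illustrative placeholders;
// take the real values from the Azure Functions pricing page for your region.
const ASSUMED_RATES = {
    pricePerGbSecond: 0.000016,       // price per GB-second of execution
    pricePerMillionExecutions: 0.20,  // price per million executions
    freeGbSeconds: 400000,            // monthly free grant, resource consumption
    freeExecutions: 1000000           // monthly free grant, executions
};

function estimateMonthlyCost(executions, avgSeconds, memoryGb, rates = ASSUMED_RATES) {
    // Resource consumption = executions x duration x memory.
    const gbSeconds = executions * avgSeconds * memoryGb;
    const billableGbSeconds = Math.max(0, gbSeconds - rates.freeGbSeconds);
    const billableExecutions = Math.max(0, executions - rates.freeExecutions);

    return billableGbSeconds * rates.pricePerGbSecond
        + (billableExecutions / 1e6) * rates.pricePerMillionExecutions;
}

// Same number of runs and same memory, but different execution times.
const shortRuns = estimateMonthlyCost(3000000, 1, 0.5);
const longRuns = estimateMonthlyCost(3000000, 10, 0.5);
console.log(shortRuns.toFixed(2), longRuns.toFixed(2));
```

With these placeholder rates, three million one-second runs at 0.5 GB come out at about 18 per month, while the same number of ten-second runs comes out at about 234. The execution charge is identical in both cases; the difference comes entirely from the GB-seconds.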

Is the Azure Function going to serve as a business-critical solution or API with high demands on short response times? Then keep in mind that the serverless architecture of Azure Functions can cause problems with cold starts. A function that has been idle for a longer time (in my experience more than 4 minutes) needs to be deployed and allocated to Azure infrastructure once again before it executes, which can lead to a delay before the execution (e.g. 10 seconds).

As stated above, if an App Service plan that fits your Azure Function needs is already deployed in the Azure environment, you can simply use that plan. The upside of the App Service plan is that your Azure Functions run on the VMs defined in the plan instead of on unspecified infrastructure served by Azure: no extra cost and no cold-start issues. With that said, there are ways to mitigate cold starts even on a consumption plan.

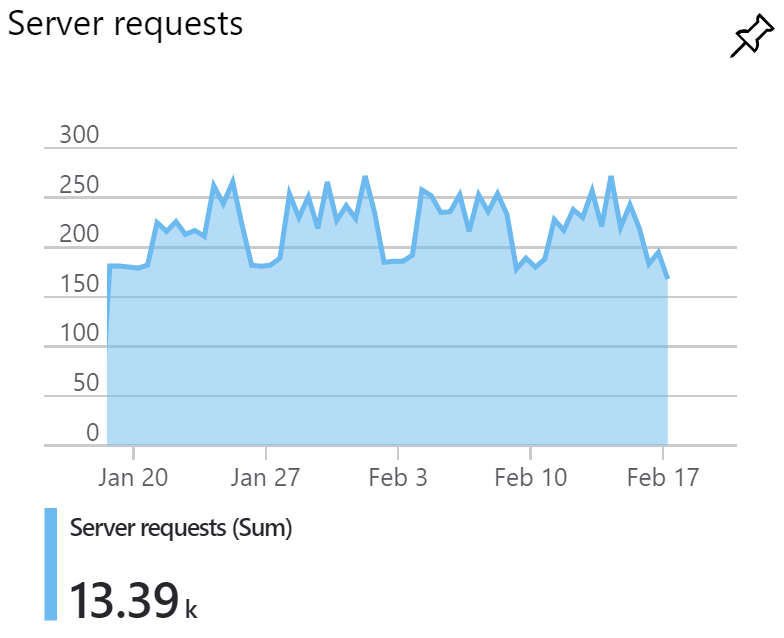

I want to add that I have chosen to go with the consumption plan in most of my implementations because of the low cost over time. A good way to keep track of the overall pattern of requests made against your functions is to enable Application Insights for your function. It gives you a good way of following response times, request rates, errors and exceptions.

Durable Functions

As an extension to Azure Functions we also have the possibility to implement Durable Functions. What is that?

Durable Functions are an extension of Azure Functions that lets you write stateful functions in a serverless environment. The extension manages state, checkpoints, and restarts for you.

In this way we can orchestrate functions to be called both synchronously and asynchronously. As a bonus, state can be tracked between executions and maintained even after VM reboots or process recycles. Patterns used in Durable Functions include, for instance, chaining (sequential execution of functions) and fan-out/fan-in, where functions are executed in parallel and the orchestration is finalized when all of the functions have reported done.
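The shape of these two patterns can be illustrated in plain JavaScript. Note that this sketch does not use the Durable Functions extension at all: it uses ordinary promises to show what chaining and fan-out/fan-in look like, without the state tracking, checkpoints and restarts that the extension adds on top. The activity functions `addOne` and `double` are hypothetical stand-ins for real work.

```javascript
// Plain-JavaScript illustration of two orchestration patterns (sketch).
// NOT the Durable Functions API: no state is persisted between steps here.

// Hypothetical "activity" functions standing in for real work.
async function addOne(n) { return n + 1; }
async function double(n) { return n * 2; }

// Chaining: run the steps one after another, feeding each result into the next.
async function chain(input) {
    const first = await addOne(input);
    const second = await double(first);
    return second;
}

// Fan-out/fan-in: start all calls in parallel, then wait for all of them.
async function fanOutFanIn(inputs) {
    const tasks = inputs.map((n) => double(n)); // fan out
    const results = await Promise.all(tasks);   // fan in
    return results.reduce((sum, n) => sum + n, 0);
}

chain(1).then((result) => console.log("chain:", result));
fanOutFanIn([1, 2, 3]).then((result) => console.log("fan-out/fan-in:", result));
```

In a real orchestration, each awaited step would be an activity function call, and the extension would checkpoint progress after every step so that the orchestration can resume after a restart.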

Durable Functions are super interesting and something I want to experiment more with to fully understand the potential. As of now it still feels a bit awkward to do big implementations in functions, since there are so many ways of implementing a solution: different services, different platforms and so on. What is your experience with Durable Functions?

Summary of considerations

- What are your requirements regarding
  - response time?
  - number of requests?
  - business criticality?
- Evaluate a suitable plan for your needs
  - Do you have an existing App Service plan you can utilize?
  - Since the cost of the consumption plan follows execution time and number of requests, does your need fit the consumption plan?
- Enable Application Insights for your Azure Function

Get started writing your functions today! Use your favorite language and try things out, and integrate your functions with Office 365, Logic Apps or other Azure services to fully understand the power of this service.

Resources

- An introduction to Azure Functions
- Understanding serverless cold start
- Azure Functions pricing
- What is Application Insights?
- Durable Functions overview